A core part of the Portal experience is our immersive visuals. We put a lot of effort into capturing them in the highest possible quality because we know how much an immersive window can help people reconnect with nature.

When filming on location we give careful consideration to the framing of a scene. As well as being aesthetically pleasing, the perfect framing can help ground you in a place, give a sense of scale and bring realism to the soundscape.

When Portal first launched, we only supported iPhone in portrait orientation. This meant we could confidently compose and crop our shots to display perfectly on those devices. iPad in landscape orientation came later, and we supported it by manually cropping each shot for both device sizes.

Back in 2019, even mid-range mobile devices struggled to play back large, high-resolution video files smoothly. Our approach at the time was to encode individual video files that exactly matched each device’s screen resolution. This meant smaller downloads, less video processing on device and complete editorial control over cropping.

This approach served us well for four years, but unfortunately it hasn’t scaled to meet our growing needs.

We needed a solution that would let us dynamically scale and crop our visuals to any size, and that is only really possible by moving the cropping on device and doing it in real time.

For our Mac app, we now deliver our visuals in a handful of standard resolutions and crop them on device to whatever size is needed. This lets us transition fluidly between screen and window sizes on demand.

Luckily for us, computers have become much better at video decoding, and all modern Macs include hardware video decoders, which means we can do this without excessive CPU usage.

This solution works well and lets us scale our visuals to fit every possible situation. The major drawback is that we lose creative control over a scene’s composition when it is shown at different aspect ratios.

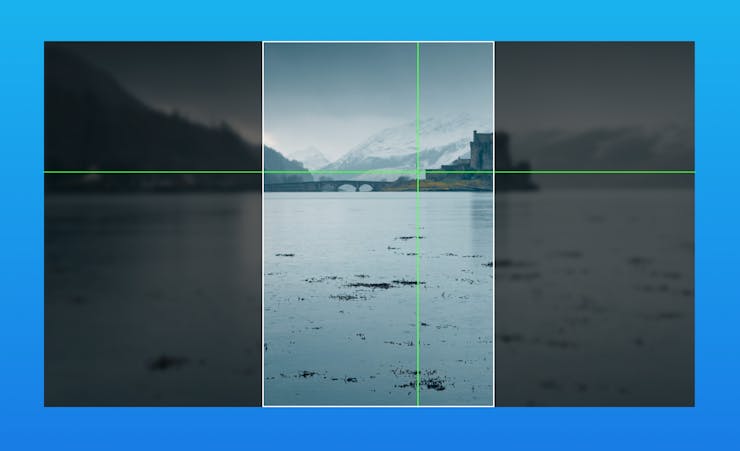

When video playback on Apple devices fills a frame, the default (and only) behaviour is to position the video in the middle of it.
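As a reference point, here is a minimal sketch of that centred aspect-fill crop in Python. It assumes sizes in pixels and returns the region of the source that remains visible; `centred_fill_crop` and `Rect` are hypothetical names for illustration, not Apple APIs (on Apple platforms the closest built-in equivalent is the `resizeAspectFill` video gravity).

```python
from typing import NamedTuple


class Rect(NamedTuple):
    x: float
    y: float
    width: float
    height: float


def centred_fill_crop(src_w: float, src_h: float, frame_w: float, frame_h: float) -> Rect:
    """Region of the source (in source pixels) that stays visible when the
    video is scaled to fill the frame and centred, i.e. aspect-fill."""
    # Aspect-fill uses the larger of the two scale factors so that the
    # frame is covered on both axes; the other axis overflows.
    scale = max(frame_w / src_w, frame_h / src_h)
    visible_w = frame_w / scale
    visible_h = frame_h / scale
    # Centring discards an equal amount from each side of the overflowing axis.
    return Rect((src_w - visible_w) / 2, (src_h - visible_h) / 2, visible_w, visible_h)


# A 16:9 4K source shown in a 9:16 portrait window keeps only a narrow
# vertical strip from the middle of the picture.
crop = centred_fill_crop(3840, 2160, 1080, 1920)
print(crop)
```

Whatever sits outside that middle strip is lost, regardless of where the subject of the scene is.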

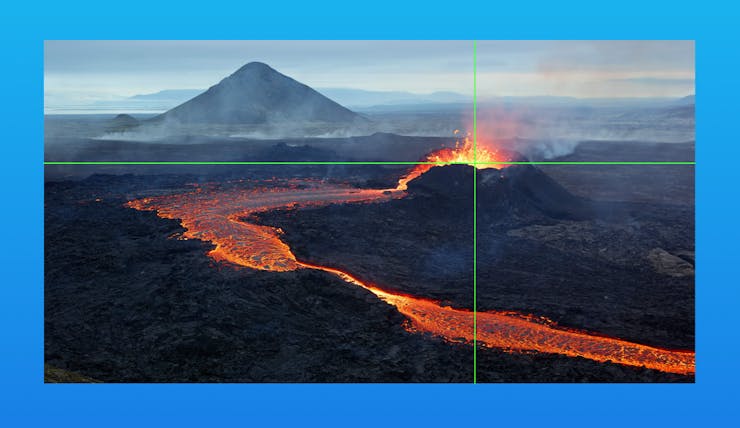

This works fine for portals whose subject sits in the centre of the visuals, but not so well when the subject is off-centre, as in this example.

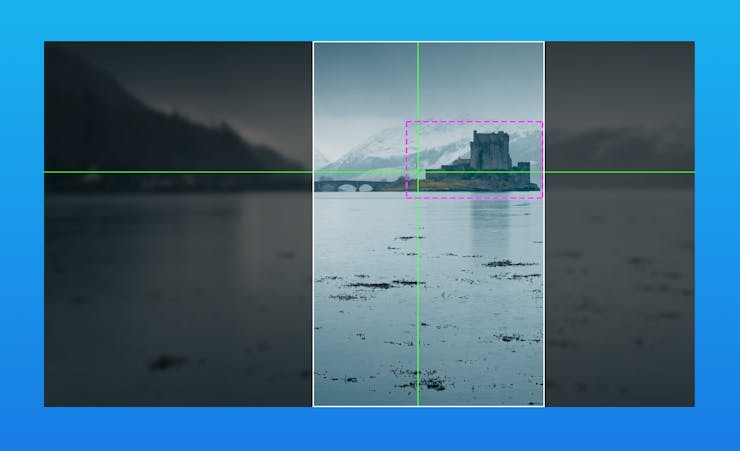

To solve this, we introduced the concept of a “focus target”: an X,Y co-ordinate that identifies the centre of gravity used for cropping operations.

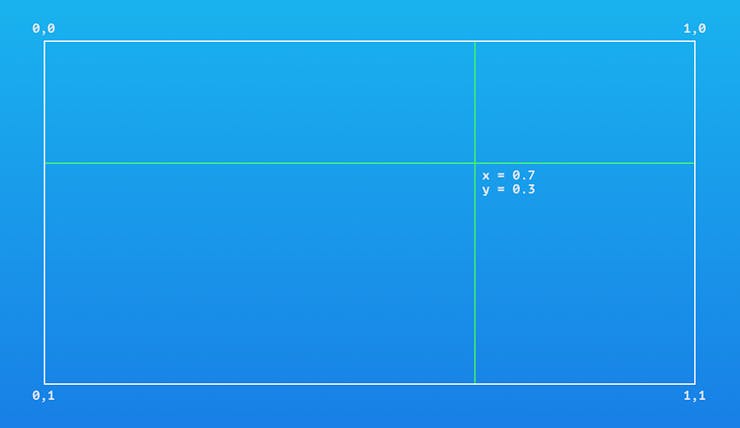

Because videos can be delivered at different absolute sizes, the focus target is specified in a 0-1 co-ordinate space, with 0,0 representing the top left, 1,1 the bottom right and 0.5,0.5 the centre point.
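A small sketch of how such a normalised target could be mapped onto a particular encoding, assuming the target is stored as an (x, y) pair of floats; `to_pixels` is a hypothetical helper used only for illustration.

```python
from typing import Tuple


def to_pixels(target: Tuple[float, float], width: float, height: float) -> Tuple[float, float]:
    """Map a focus target in 0-1 space (0,0 = top left, 1,1 = bottom right)
    onto a video frame of the given pixel size."""
    fx, fy = target
    if not (0.0 <= fx <= 1.0 and 0.0 <= fy <= 1.0):
        raise ValueError("focus target must lie within 0-1 on both axes")
    return fx * width, fy * height


# The same target lands on the same spot in every delivered resolution.
target = (0.75, 0.5)
print(to_pixels(target, 3840, 2160))  # (2880.0, 1080.0)
print(to_pixels(target, 1920, 1080))  # (1440.0, 540.0)
```

Storing one relative value per video means a single piece of metadata serves every resolution it is delivered in.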

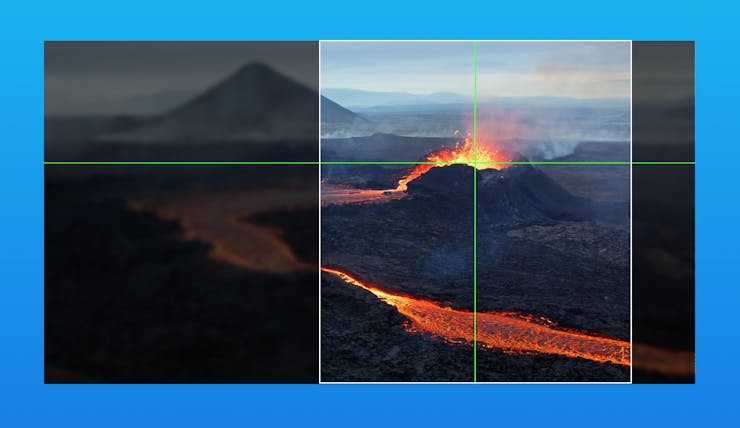

Using this focus target data we can weight the cropping to pull focus towards the subject of the image.
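One simple way to weight the crop, sketched along a single axis: with aspect-fill only one axis overflows the frame, so cropping reduces to choosing an offset along that axis. `scaled_len` (the video's length on that axis after scaling), `frame_len` and `target_centred_offset` are hypothetical names, and this is only one possible weighting. It centres the frame on the target and clamps at the video's edges.

```python
def target_centred_offset(scaled_len: float, frame_len: float, target: float) -> float:
    """Offset of the visible frame along the overflowing axis, placing the
    frame's centre over the focus target (target is in 0-1 space)."""
    if frame_len > scaled_len:
        raise ValueError("with aspect-fill the video is never shorter than the frame")
    ideal = target * scaled_len - frame_len / 2
    # Clamp so the frame never runs past either edge of the video.
    return min(max(ideal, 0.0), scaled_len - frame_len)


offset = target_centred_offset(3000, 1000, 0.75)
print(offset)  # 1750.0
# The target (at 2250) now sits at 2250 - 1750 = 500, the middle of the frame.
```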

This really helps improve the crop, but can you see what happened in the example above? We went from a composition where the subject sat two-thirds of the way into the image to one where it is placed in the centre of the crop.

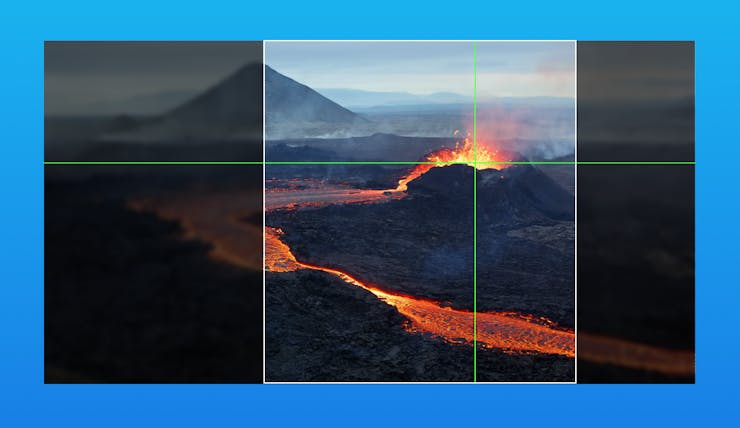

To solve this, we have to maintain the focus target’s offset within the cropped image, which keeps the crop as close to the original composition as possible.
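A minimal sketch of keeping that offset, under one reading of "maintain the offset": the target stays at the same fraction of the crop as it occupies in the full video. It works along the single axis that overflows after aspect-fill, where `scaled_len` is the video's length on that axis after scaling and `frame_len` is the frame's length; both names and `offset_preserving_crop` are hypothetical. The condition `target * scaled_len - offset == target * frame_len` solves to a one-line formula that always stays within the video's edges.

```python
def offset_preserving_crop(scaled_len: float, frame_len: float, target: float) -> float:
    """Offset of the visible frame along the overflowing axis such that the
    focus target keeps its relative position (0-1) inside the crop."""
    if frame_len > scaled_len:
        raise ValueError("with aspect-fill the video is never shorter than the frame")
    # Solve target * scaled_len - offset == target * frame_len for offset.
    # Because 0 <= target <= 1, the result lies in [0, scaled_len - frame_len].
    return target * (scaled_len - frame_len)


offset = offset_preserving_crop(3000, 1000, 0.75)
print(offset)  # 1500.0
# The target (at 2250) sits at 2250 - 1500 = 750, i.e. 0.75 of the frame,
# matching its position in the uncropped video.
```

A pleasant side effect of this formulation is that no clamping is needed: a target at 0 pins the crop to the leading edge and a target at 1 pins it to the trailing edge.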

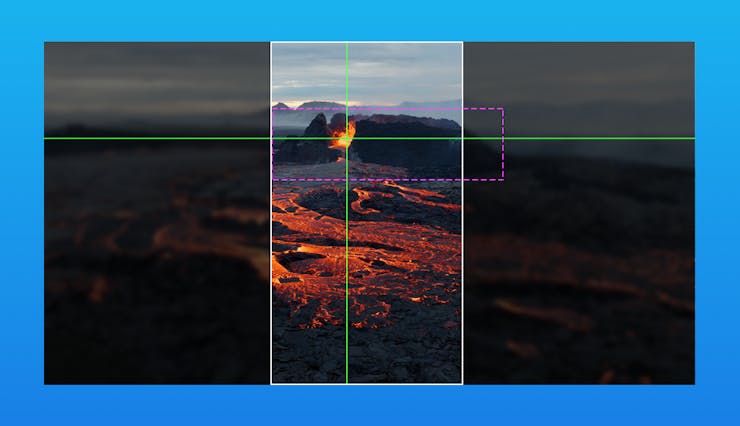

Unfortunately, we now have another problem: for larger subjects and narrower crops, this can lead to awkward partial cropping of the subject.

To solve this, we introduced a second concept that we call a “focus boundary”. This is a box drawn around the subject to protect it from partial cropping, expressed as (x, y, width, height) in the same relative co-ordinate system as the focus target.

Our algorithm now uses the boundary to nudge the ideal subject placement until the boundary fits entirely inside the frame.
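A sketch of that nudge along one axis, assuming aspect-fill (so only one axis overflows), that the focus boundary has been reduced to its start and length on that axis (both in 0-1 space), and that the ideal placement keeps the target at the same relative position in the crop. `nudge_into_boundary` and its parameters are hypothetical; it clamps the ideal offset into the range of offsets that keep the whole boundary visible.

```python
def nudge_into_boundary(scaled_len: float, frame_len: float, target: float,
                        b_start: float, b_len: float) -> float:
    """Offset of the visible frame along the overflowing axis that stays as
    close as possible to the ideal placement while keeping the whole focus
    boundary [b_start, b_start + b_len] (0-1 space) inside the frame."""
    if frame_len > scaled_len:
        raise ValueError("with aspect-fill the video is never shorter than the frame")
    max_offset = scaled_len - frame_len
    # Ideal placement: keep the target at the same relative position.
    ideal = target * max_offset
    boundary_start = b_start * scaled_len
    boundary_end = (b_start + b_len) * scaled_len
    if boundary_end - boundary_start > frame_len:
        raise ValueError("boundary is larger than the frame; it cannot fit")
    # Offsets in [lo, hi] show the whole boundary without leaving the video.
    lo = max(boundary_end - frame_len, 0.0)
    hi = min(boundary_start, max_offset)
    return min(max(ideal, lo), hi)


offset = nudge_into_boundary(3000, 1000, 0.75, b_start=0.625, b_len=0.3125)
print(offset)  # 1812.5
# The ideal offset of 1500 would cut the boundary (1875 to 2812.5) at 2500;
# the nudged frame spans 1812.5 to 2812.5 and contains the whole boundary.
```

Clamping moves the crop only as far as needed, so when the boundary already fits the ideal placement is left untouched.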

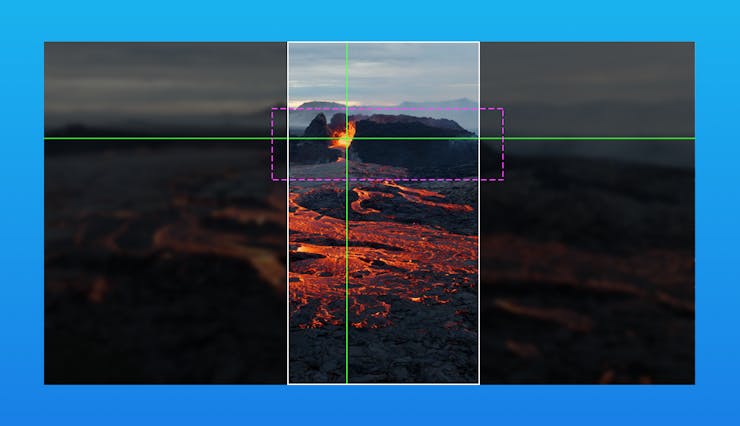

Once this is accounted for, we can confidently use videos at many different aspect ratios, knowing we’ll get the best crop possible. But we still have one last edge case to deal with: what happens when the focus boundary is larger than the frame we are trying to fill?

In this situation we have no option but to break the boundary rules we set and start cropping into it. But how do we know which side to crop from? Our solution is to crop an amount from each edge that is weighted by the position of the focus target relative to the focus boundary. This pulls the focus target further towards the centre of the frame as the crop gets narrower, and in our trials it gave the best balance.
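A sketch of that weighted crop for the oversized case, along the single axis that overflows after aspect-fill, with the focus boundary given as a start and length in 0-1 space. All names are hypothetical, and weighting each edge by the target's relative position inside the boundary is one reading of the description above, not Portal's actual code. With this weighting the target sits at the same fraction of the frame as it does of the boundary, so its absolute distance from the frame centre shrinks as the frame narrows.

```python
def crop_into_boundary(scaled_len: float, frame_len: float, target: float,
                       b_start: float, b_len: float) -> float:
    """Offset of the visible frame along the overflowing axis when the focus
    boundary [b_start, b_start + b_len] (0-1 space) is larger than the frame.
    The excess is cropped from both edges of the boundary, weighted by where
    the focus target sits inside it."""
    boundary_start = b_start * scaled_len
    excess = b_len * scaled_len - frame_len
    if excess <= 0:
        raise ValueError("boundary fits in the frame; no need to crop into it")
    # Relative position of the target inside the boundary (0 = leading edge,
    # 1 = trailing edge), clamped in case the target lies outside it.
    t = min(max((target - b_start) / b_len, 0.0), 1.0)
    # Crop t * excess from the leading edge and (1 - t) * excess from the
    # trailing edge, so more is taken from the side farther from the target.
    return boundary_start + t * excess


for frame_len in (600, 400):
    offset = crop_into_boundary(3000, frame_len, 0.75, b_start=0.625, b_len=0.3125)
    target_in_frame = 0.75 * 3000 - offset
    print(frame_len, offset, target_in_frame)
```

For these two frame widths the target lands 240 pixels into a 600-pixel frame and 160 pixels into a 400-pixel frame: still 0.4 of the way across, but 60 and then 40 pixels from the centre.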

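At this point we have an algorithm that:

- weights the crop towards the focus target,
- maintains the focus target’s offset so the original composition is preserved as closely as possible,
- nudges the crop so the focus boundary fits entirely inside the frame, and
- when the focus boundary is larger than the frame, crops into it from each edge, weighted by the focus target’s position within the boundary.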

Of course, in the Portal app we have to make sure all of this plays nicely with multiple screens, animations, parallax and seamless transitions between images, videos and blurs. Things are a little more complicated in the real world!

These changes are available in Portal for Mac 1.3.0 and later. Customers with ultra-wide displays or portrait-orientation windows will likely see a big improvement in scene composition right away, and we’ll be bringing these changes to our other platforms later in the year.

Hopefully this article has given you a little insight into one of the not-so-obvious details we on the Portal team spend our time sweating over to ensure every customer has the most immersive experience possible.